What Is Crawlability? Crawling, Indexing, and Ranking Explained

Hand off the toughest tasks in SEO, PPC, and content without compromising quality

Today, I’ll tackle a concept at the heart of search engine optimization (SEO): crawlability.

By the end of this guide, here’s what we’ll have covered together:

- a no-BS breakdown of “What is crawlability,”

- an exploration of why optimizing for crawlability is a huge step toward SEO success,

- and, to sweeten the deal, I’ll even sprinkle in some handy tips and tricks to make your website the apple of every web crawler’s eye.

What Is Crawlability?

Crawlability refers to the capability of a search engine to access, interpret, and navigate through all the pages of a website. The process of crawling a website is carried out by automated algorithmic tools known as bots, spiders, or web crawlers (like Google’s Googlebot or Bing’s Bingbot), which browse the web to discover new or updated content—a process achieved by following internal and external links embedded within a website’s pages.
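To make the link-following idea concrete, here is a minimal sketch of a breadth-first crawler in Python. It is not how Googlebot works internally; it only illustrates discovery by following links. The `crawl` and `LinkExtractor` names are hypothetical, and the `fetch` function is an assumption: here it reads pages from an in-memory dictionary standing in for a real site instead of making HTTP requests.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag on a page (hypothetical helper)."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start_url, fetch):
    """Breadth-first crawl that discovers pages only by following links.

    `fetch` is an assumed callable that returns a page's HTML, or None
    if the page can't be retrieved.
    """
    host = urlparse(start_url).netloc
    seen = {start_url}
    queue = deque([start_url])
    discovered = []
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue  # unreachable page: nothing to follow from here
        discovered.append(url)
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            # resolve relative links and drop #fragments
            absolute = urldefrag(urljoin(url, href)).url
            # stay on the same host, as a single-site crawl would
            if urlparse(absolute).netloc == host and absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return discovered


# Hypothetical site: each URL maps to its HTML.
SITE = {
    "https://example.com/": '<a href="/blog/">Blog</a> <a href="/about/">About</a>',
    "https://example.com/blog/": '<a href="/blog/post-1/">Post 1</a>',
    "https://example.com/blog/post-1/": '<a href="/">Home</a>',
    "https://example.com/about/": "",
    "https://example.com/orphan/": "No other page links here.",
}

print(crawl("https://example.com/", SITE.get))  # /orphan/ is never discovered
```

Because the sketch only follows links, the `/orphan/` page is never found. That is one reason internal linking and XML sitemaps matter for crawlability.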

How a site is constructed, from the complexity of its HTML to its use of JavaScript, can either roll out the red carpet or set up roadblocks for search engine bots. So optimizing crawlability means getting every element of a website (its structure, meta tags, page loading times, and the rules in its robots.txt file) to work together harmoniously.

Learn more: Interested in broadening your SEO knowledge even further? Check out our SEO glossary, where we’ve explained more than 250 terms.

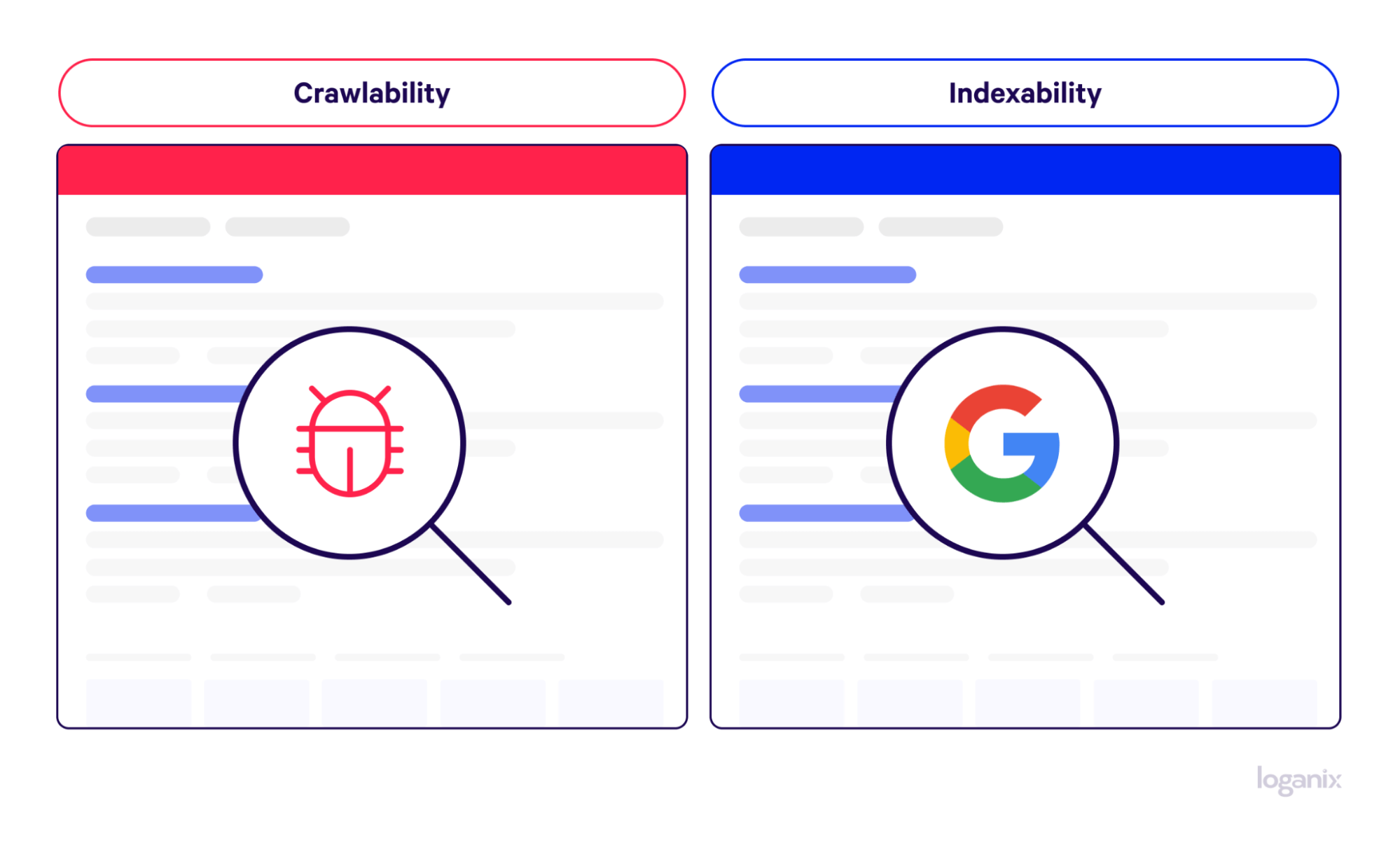

Crawlability vs. Indexability

Crawlability, as we’ve just discussed, is a concept centered around how easy it is for search engine crawlers to navigate and understand the various elements that make up a website.

Indexability takes the baton from crawlability.

Once a search engine bot has successfully crawled a website, a suite of search engine algorithms decides whether to add each page to the search engine’s index: an enormous database where crawled information is stored and later retrieved when a relevant search query is made. Indexing involves analyzing and categorizing each page based on its content, metadata, keywords, and many other signals.

Think of it as the search engine giving your page a thumbs up and saying, “Yep, this content is worthy of being found in search results.”

Note that crawling a web page does not guarantee it will be indexed. Certain conditions can make a page non-indexable, such as a “noindex” directive in a robots meta tag or an X-Robots-Tag HTTP header, poor-quality or harmful content, or penalties for violating guidelines like Google’s Spam Policies. (Google does not support “noindex” rules placed in robots.txt files.)
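As a rough illustration of the “noindex” check, the sketch below looks for the directive in a page’s robots meta tags and in an X-Robots-Tag response header. The `has_noindex` helper and `RobotsMetaParser` class are hypothetical; the sketch treats `none` as implying `noindex` and deliberately skips the user-agent-prefixed header form (for example `googlebot: noindex`). Keep in mind that a crawler can only read these directives on pages it is allowed to crawl, so a page blocked by robots.txt never gets its noindex directive seen.

```python
from html.parser import HTMLParser

# "none" is shorthand for "noindex, nofollow"
NOINDEX_VALUES = {"noindex", "none"}


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attrs = dict(attrs)
        if (attrs.get("name") or "").lower() in {"robots", "googlebot"}:
            for part in (attrs.get("content") or "").split(","):
                self.directives.add(part.strip().lower())


def has_noindex(html, headers):
    """Return True if the page opts out of indexing via meta tag or X-Robots-Tag header."""
    parser = RobotsMetaParser()
    parser.feed(html)
    # HTTP header names are case-insensitive, so normalise them first
    header_value = {k.lower(): v for k, v in headers.items()}.get("x-robots-tag", "")
    header_directives = {part.strip().lower() for part in header_value.split(",")}
    return bool(NOINDEX_VALUES & (parser.directives | header_directives))


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(page, {}))                                    # True
print(has_noindex("<p>Hi</p>", {"X-Robots-Tag": "noindex"}))    # True
print(has_noindex("<p>Hi</p>", {}))                             # False
```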

Learn more: what is a crawl error?

Why Is Crawlability Important?

Now that we’ve grasped the “what,” let’s tackle the “why.”

First Impressions Matter: The Gateway to Indexing

As we’ve touched on, crawlability is all about making a website as search engine bot-friendly as possible.

What makes a site easy to crawl?

It’s a mix of things like how your site is built (its site architecture), whether your links lead somewhere meaningful (hyperlink integrity), and how your website talks to the search engine (server responses).

When these elements are in tip-top shape, your site becomes a web crawler’s paradise, which makes its pages more likely to be included in search engine results. That first impression (how easily a spider can crawl your site) is mighty important for getting your content noticed and indexed.

Visibility and Rankings: The SEO Spotlight

The easier it is for search engine bots to crawl a website, the better they can interpret its relevance and authority on specific topics. This matters because the more relevant and authoritative your site is perceived to be, the higher it’s likely to rank on search engine results pages (SERPs) and the more likely it is to be noticed by your target audience.

In SEO circles, this is known as topical relevance: the idea that a website’s pages are evaluated not merely on keywords but on the site’s ability to establish authority in a specific subject area.

But it’s not just about making a good impression. A crawl-friendly site makes it more likely that every page, every update, and every piece of content gets noticed, and that level of visibility is crucial for showing up in search results.

Keyword Optimization: Getting Found for the Right Reasons

If your website has issues like server errors, confusing navigation, or misconfigured robots.txt rules, even the best keyword strategy can fall flat. Why? Because if search engine bots can’t find or understand your content, they can’t index it properly. And if it isn’t indexed, it won’t appear in search results, no matter how good your content strategy is.
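To see how an overly broad robots.txt rule can hide content, here is a small check using Python’s standard-library `urllib.robotparser`. The robots.txt contents and URLs are hypothetical. This parser applies rules in first-match order and may not match Googlebot’s behavior exactly (for example, with wildcard patterns), so treat it as a quick sanity check rather than a definitive verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: the second rule was meant to block drafts,
# but as written it blocks every URL whose path starts with /blog.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /blog
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in [
    "https://example.com/admin/settings",
    "https://example.com/blog/crawlability-guide",
    "https://example.com/services/",
]:
    allowed = parser.can_fetch("Googlebot", url)
    print(f"{url}: {'allowed' if allowed else 'blocked'}")
```

Because robots.txt rules are prefix matches, `Disallow: /blog` blocks the guide you most want ranked along with everything else under that path.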

In short, good crawlability gives your keyword-rich content the best chance at ranking on the SERPs.

Navigational Ease: Setting Up a Clear Path

When a website has a logical layout, with pages organized in a clear hierarchy and linked in a way that makes sense, crawlers can quickly move from one page to another, helping them easily understand what a site is about and index its pages more effectively.

A well-organized website and good crawlability make it easier for search engines to discover and index web content. Once again, this leads to better visibility on the SERPs.

Supporting Your Website’s Crawlability

Now that we’ve got the basics down, let’s roll up our sleeves and dive into some practical tips to boost your website’s crawlability:

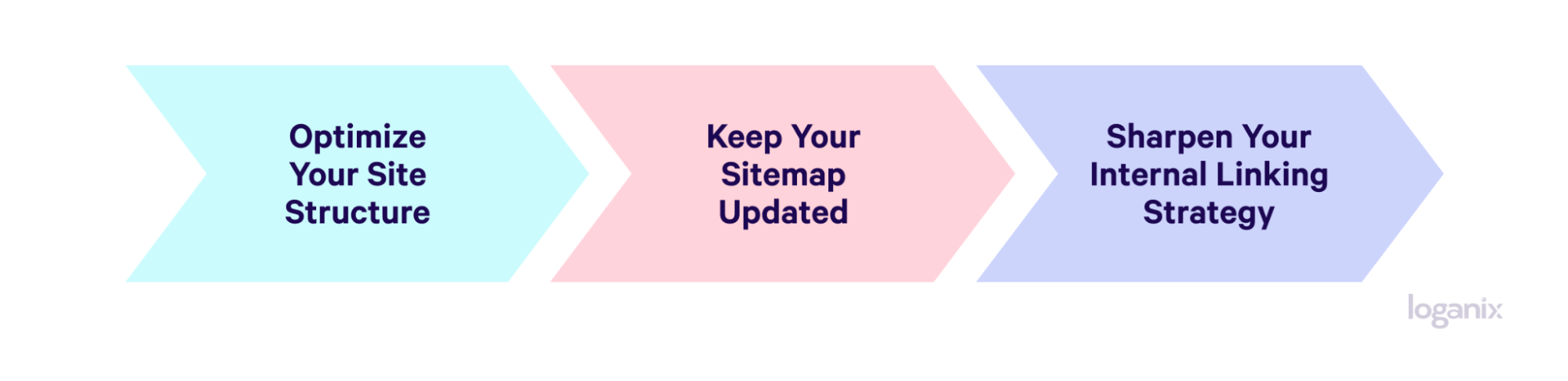

Optimize Your Site Structure

Here’s what you can do:

- Keep your most important pages no more than a few clicks away from the homepage.

- Use breadcrumb navigation to help bots understand your site hierarchy.

- Avoid deep nesting of pages—keep the structure intuitive and simple.
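One way to check the “a few clicks from the homepage” guideline is to compute each page’s click depth with a breadth-first search over your internal links. In this sketch the link graph is hypothetical (in practice you would export it from a site crawl), and the three-click `MAX_DEPTH` threshold is an assumption, not a search engine rule.

```python
from collections import deque

# Hypothetical internal-link graph: each page maps to the pages it links to.
LINKS = {
    "/": ["/services/", "/blog/"],
    "/services/": ["/services/seo/"],
    "/services/seo/": [],
    "/blog/": ["/blog/page-2/"],
    "/blog/page-2/": ["/blog/page-3/"],
    "/blog/page-3/": ["/blog/old-post/"],
    "/blog/old-post/": [],
    "/landing/": [],  # exists, but nothing links to it
}


def click_depths(links, home="/"):
    """Fewest clicks from the homepage to each reachable page (breadth-first search)."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


MAX_DEPTH = 3  # assumed threshold for "a few clicks"
depths = click_depths(LINKS)
too_deep = sorted(page for page, depth in depths.items() if depth > MAX_DEPTH)
unreachable = sorted(set(LINKS) - set(depths))

print("Too deep:", too_deep)        # ['/blog/old-post/']
print("Unreachable:", unreachable)  # ['/landing/']
```

Pages listed as unreachable cannot be reached by following internal links from the homepage (orphan pages), so crawlers can only find them through sitemaps or external links.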

Keep Your Sitemap Updated

To make the most of a sitemap:

- Regularly update your XML sitemap and submit it to search engines.

- Ensure that your sitemap only includes canonical URLs and excludes duplicate pages.

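- If your website is large, split your sitemap into smaller, categorized sitemaps listed in a sitemap index file. This keeps each file manageable and within the protocol’s limit of 50,000 URLs or 50 MB (uncompressed) per sitemap.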
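Here is a minimal sketch of generating XML sitemaps with Python’s built-in `xml.etree.ElementTree`, splitting the URL list into files of at most 50,000 URLs and listing them in a sitemap index. The function names, file-naming scheme, and base URL are hypothetical; the sketch assumes you pass in only canonical URLs, and it doesn’t check the 50 MB size limit or add optional tags such as `<lastmod>`.

```python
import xml.etree.ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000  # per-file URL limit in the sitemaps.org protocol


def build_sitemap(urls):
    """Return a single <urlset> sitemap document as a string."""
    urlset = ET.Element("urlset", xmlns=NS)
    for loc in urls:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # ElementTree escapes & and < for us
    return ET.tostring(urlset, encoding="unicode")


def build_sitemaps(urls, base, max_urls=MAX_URLS):
    """Split canonical URLs into chunks; return (index_xml, {filename: sitemap_xml})."""
    urls = list(dict.fromkeys(urls))  # drop exact duplicates, keep original order
    files = {}
    index = ET.Element("sitemapindex", xmlns=NS)
    for start in range(0, len(urls), max_urls):
        name = f"sitemap-{start // max_urls + 1}.xml"
        files[name] = build_sitemap(urls[start:start + max_urls])
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = f"{base}/{name}"
    return ET.tostring(index, encoding="unicode"), files


# A tiny max_urls is used here only to demonstrate the splitting.
pages = [f"https://example.com/products/{n}/" for n in range(1, 6)]
index_xml, files = build_sitemaps(pages, "https://example.com", max_urls=2)
print(sorted(files))  # ['sitemap-1.xml', 'sitemap-2.xml', 'sitemap-3.xml']
print(index_xml)
```

On a real site you would keep the default `max_urls`, upload each file, and submit only the index file to search engines.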

Sharpen Your Internal Linking Strategy

To optimize your internal linking:

- Use descriptive anchor texts that give an idea of the linked page’s content.

- Link related content together to keep crawlers (and users) engaged.

- Regularly check for and fix any broken links, which can hinder crawlers.
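The sketch below combines two of these checks: it flags internal links that point to pages returning an error status, and links whose anchor text is generic rather than descriptive. The crawl data, status codes, and list of generic phrases are all hypothetical placeholders for what a site crawl would give you.

```python
# Hypothetical crawl data: (source page, link target, anchor text)
LINKS = [
    ("/", "/services/seo/", "SEO services"),
    ("/blog/", "/blog/crawlability/", "click here"),
    ("/blog/crawlability/", "/glossary/robots-txt/", "robots.txt explained"),
    ("/blog/crawlability/", "/old-pricing/", "our pricing"),
]

# Hypothetical HTTP status codes recorded for each link target
STATUS = {
    "/services/seo/": 200,
    "/blog/crawlability/": 200,
    "/glossary/robots-txt/": 200,
    "/old-pricing/": 404,
}

# Assumed list of anchor phrases that say nothing about the target page
GENERIC_ANCHORS = {"click here", "here", "read more", "learn more", "this page"}


def audit_links(links, status):
    """Return (broken, generic): links to error pages, and links with vague anchor text."""
    # A target with no recorded response is treated as broken (an assumption).
    broken = [(src, dst) for src, dst, _ in links if status.get(dst, 404) >= 400]
    generic = [
        (src, dst, text)
        for src, dst, text in links
        if text.strip().lower() in GENERIC_ANCHORS
    ]
    return broken, generic


broken, generic = audit_links(LINKS, STATUS)
print("Broken:", broken)
print("Generic anchors:", generic)
```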

Crawlability FAQ

Q1: Can a Web Page Be Indexed Without Being Crawled?

Answer: While a web page typically cannot be properly indexed without being crawled, in rare cases, Google can index a URL without crawling it based on the URL and anchor text of its backlinks. However, in such instances, the page title and description may not appear in the search results.

Q2: How Often Should a Website Be Crawled for Optimal SEO Performance?

Answer: The frequency of website crawling varies, but factors like website updates, content relevance, and site authority generally influence it. For optimal SEO performance, regular content updates and site maintenance can encourage more frequent crawling.

Q3: How Does Crawlability Impact the Overall User Experience and Website Performance?

Answer: Good crawlability positively impacts user experience by ensuring that relevant pages are found and indexed, so users can reach them from search results. It can also support your website’s overall search performance, because efficient crawling lets search engines discover and index new or updated content sooner.

Conclusion and Next Steps

Understanding crawlability is crucial to SEO success, but there’s so much more to an effective SEO strategy.

That’s where Loganix comes in.

With over a decade of experience in the SEO business, we offer a range of managed SEO services tailored to fit your needs, goals, and budget.

🚀 Explore Loganix’s SEO services and discover how we can help you grow your business and achieve your digital marketing goals. 🚀

Written by Adam Steele on December 20, 2023

COO and Product Director at Loganix. Recovering SEO, now focused on understanding how Loganix can make the work lives of SEO and agency folks more enjoyable and profitable. Writing from beautiful Vancouver, British Columbia.